Is your teen begging to start an Instagram or Snapchat account? Introducing kids to social media is a big deal because it can expose them to the broader digital world — and all the risks associated with it.

In this article, we’ll discuss how to introduce kids to social media and tips for helping them stay safe.

There are two primary factors to consider when deciding if your child is ready for social media: age and maturity.

Aside from a handful of apps designed for younger kids, such as Kinzoo and Messenger Kids, most social media platforms require users to be at least 13 years old. However, just because your child is technically old enough doesn’t mean they’re automatically ready for Snapchat (or TikTok, Instagram, or any of the other platforms).

If your 15-year-old isn’t mature enough for social media, you shouldn’t feel pressure to let them use it. But don’t keep them in the dark just because they’re not ready yet — it’s a good idea to start educating your child on how to safely use social media before you hand them the reins.

Once you've decided it’s time to let your teen use social media, here are some tips to get them going:

Explaining the risks of social media shows your teen why it’s important to behave responsibly online. It also helps them learn to spot danger — an important ingredient for lowering their risk.

We’ve covered many of these dangers, including:

We often think of teens as inevitably drawn to risk, but studies actually reveal that teens are often more cautious than their younger peers, choosing the safer option when given the information needed to make that choice.

To equip your teen with the ability to make safe choices on social media, teach them about:

Think of these tips as starting points. You’ll want to continuously check in with your child once they start using social media on their own.

As your child matures, it may be reasonable to give them increasing leeway in when and how often they use social media (within reason). But when they’re first starting out, it’s a good idea to create more stringent boundaries to help them learn appropriate limits.

Utilize the parental controls on the social media apps your child uses, as well as any built into their device.

The American Psychological Association recommends that parents monitor social media for all kids under 15, and depending on your child’s maturity level, it may be necessary to do so for longer. Here are some ways to stay involved:

Did you know? BrightCanary is a great way to give your child independence without compromising on safety because you get alerts when there’s a red flag … without having to look at everything your child does online.

By being proactive, parents can introduce social media to their child in a way that encourages them to be responsible and stay safe. Parents should educate their child on the risks of social media, teach them tips for staying safe, and remain involved in their child’s online activity.

BrightCanary gives you real-time insights to keep your child safe online. The app uses advanced technology to monitor them on the apps and websites they use most often. Download on the App Store today and get started for free.

Whether it’s difficulty completing homework, getting distracted in the middle of chores, or zoning out in class, you might notice your tween or teen struggling to stay focused. It’s a common problem that’s only made worse by ever-encroaching technology. That’s why we consulted the experts for these tips on how to improve focus in kids.

There are a number of reasons why a child may have difficulty getting or staying focused on a task.

Aside from the more obvious signs, like squirming in their seat or abandoning their homework, inattentiveness can also fly under the radar in some kids.

According to Anna Marcolin, LCSW, psychotherapist, life coach, and host of the globally top-ranked mental health and wellness podcast The Badass Confidence Coach, here are some less obvious signs your child may be struggling with focus:

“When talking to your child about their focus challenges, aim to open the conversation in a supportive and understanding way,” says Marcolin. Here are her tips for talking to your child about their attention struggles:

Marcolin points to these simple, evidence-based strategies for helping kids sharpen their focus:

Sometimes, a child’s attention issues rise to a level where outside intervention is necessary. Marcolin points to these signs that a professional assessment is warranted:

Technology distractions, stress, and mental health issues are some of the reasons tweens and teens might struggle with attention. Parents can help their distracted child by collaborating with them to identify the problem and develop healthy coping strategies.

If your child is spending a lot of time on their phone or tablet, stay involved and understand what content they’re consuming. BrightCanary is the best way for parents to monitor their children’s activities on Apple devices. Download the app and start your free trial today.

Catfishing is on the rise, and teens are an increasing target. Nearly two-thirds of young people report being targeted by catfishers. But what is catfishing, and how can you keep your teen safe? In this article, we’ll go over how teens get catfished, prevention tips, and what to do if your teen is a victim.

Catfishing is the act of setting up a fake online identity in order to deceive and control others. It typically involves convincing the victim that they are in a genuine romantic relationship or friendship with the perpetrator.

There are a number of reasons a catfisher might target teens, including:

Certain factors make teens particularly susceptible to catfishing, including common adolescent vulnerabilities and the online spaces where they tend to hang out.

Here are steps you can take to prevent your teen from becoming a victim of this online scam:

Teach your child about the dangers of oversharing, the importance of not talking to strangers online, and the warning signs that they’re being groomed.

Talk to your child about what apps and websites are and aren’t okay for them to use and how you expect them to behave on the internet. Setting limits on when and where they can use their device can help you keep a better eye on their safety.

Use spot checks, regular digital check-ins, and parental monitoring tools like BrightCanary to keep an eye on your child online.

If you discover your teen is being catfished, here’s how to help them respond:

Teens are uniquely vulnerable to catfishing, and their victimization is on the rise. It’s important to educate your child on how to spot catfishing and steps they can take to prevent being targeted. It’s also important to monitor your child online so you can spot warning signs.

BrightCanary can help you keep tabs on your child’s online activity, including messages to suspicious numbers or potentially concerning interactions with people in their DMs. Download the app today and get started for free.

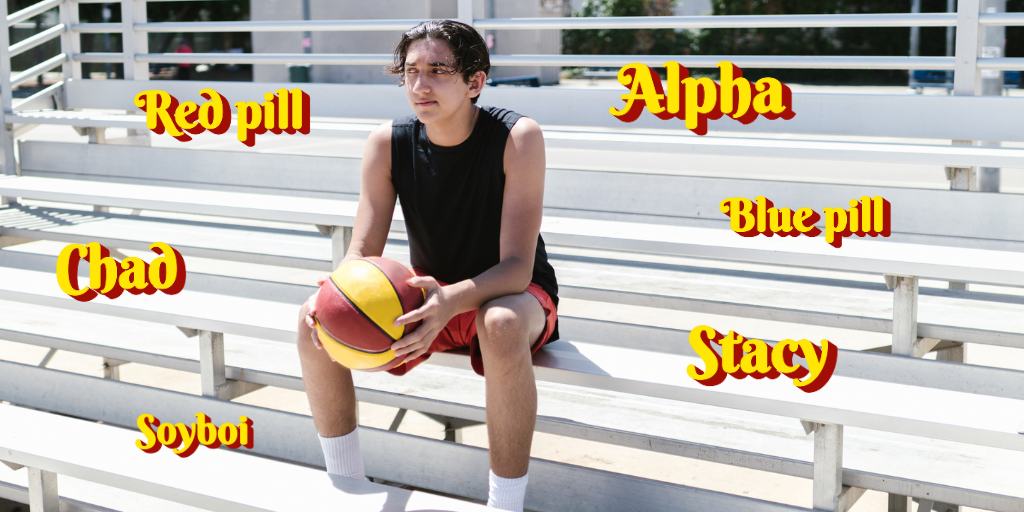

“Mason thinks he’s such a Chad, but he’s nothing but a beta cuck. He better red pill, or he’s never gonna land a Stacy.” If that sentence has you scratching your head, don’t worry. It just means you’re not fluent in the language of the manosphere.

It’s hard to deny the popularity of this toxic movement among adolescents. It has grown over the last several years and reached popular consciousness through Netflix’s Adolescence. Enter: this manosphere glossary.

Although your life might be happier knowing as little as possible about the manosphere, if you have a kid, you should familiarize yourself with some of its terms so you can spot whether your child is being influenced by it.

Alpha: Alphas are dominant men who overpower others and have their pick of sexual partners.

Awalt: Awalt is an acronym for All Women Are Like That. It represents the manosphere belief that women are predictable and stereotypical in the way they behave — for example, that they want to “marry up,” are manipulative, and only want to date Chads (see below).

Beta: Betas are the opposite of alphas. They’re men who are weak, unattractive, and submissive.

Chad: A Chad is a virile, uber-masculine, and powerful man who women flock to — aka an ultimate alpha. A “gigachad” is the most alpha of all the alpha males.

Cuck: Cuck is a shortening of “cuckold.” In the manosphere, it refers to a man whose wife has been unfaithful, particularly a beta whose female partner has been with an alpha male. It’s typically used as an insult and can also refer to men who derive sexual pleasure from allowing their wife to sleep with another man.

Incels: “Incel” is a mashup of “involuntary celibate.” Men who self-identify as incels are unable to find a sexual partner, despite feeling entitled to one, and blame women for their loneliness.

Pickup artist (PUA): Pickup artists share strategies to manipulate or coerce women into sex.

Red pill/blue pill: These terms have been co-opted from The Matrix. In the manosphere, a red pill is a person who has “woken up” to the fact that society actually discriminates against men, not women. A blue pill refers to a person who either has yet to realize this “fact” or actively works to convert red-pillers back to being so-called sheep.

Sigma male: As opposed to an alpha male or beta male, the sigma male is a lone wolf who operates outside of social structures. While not necessarily negative, the use of this term can indicate that your child is consuming content in or around the manosphere.

Sexual market value (SMV): In the messed-up universe of the manosphere, a person’s worth is measured by their sexual desirability, or their “sexual market value.”

Sexual marketplace (SMP): Lest you get the impression that the manosphere sees people as anything besides pieces of meat, I introduce to you the sexual marketplace, a metaphorical place where people flaunt themselves and compete for romantic and sexual partners.

Soy/soyboi: The term soy is taken from the soybean and means any perceived characteristic or behavior that isn’t manly enough. A soyboi is a male who lacks sufficiently manly traits.

Stacy: A Stacy is an idealized version of femininity, according to manosphere standards. Stacys are ultra-attractive, desirable, and promiscuous, but also vapid, and they are considered unattainable to any man who isn’t a Chad.

Succubus: Incels have taken this character from folklore and twisted it to mean a woman who gets her sexual needs met by betas without regard to the harm it may cause them.

Wizard: A wizard is a male over the age of 30 who has never had sex. The term can be used both as an insult (in the pickup artist community) and as an honorific (in the incel community).

The manosphere is a toxic online movement filled with misogynistic terms. If you want to make sure your little Chad hasn’t taken the red pill and gone all Awalt with their belief system, it’s wise to familiarize yourself with this manosphere glossary.

For help monitoring your child online to keep them safe — and safely away from the manosphere — BrightCanary is your best option on Apple devices. The app uses advanced technology to scan your child’s activity online and sends you a notification when they encounter anything worrisome on the apps they use most. Download BrightCanary today to get started for free.

Want to stay on top of other terms teens use? Check out our emoji guide and common teen dating slang terms.

Much like YouTube, Netflix is a great place to find quality entertainment for kids. (Hello, Carmen Sandiego reboot!) But without the right settings, Netflix can expose your child to inappropriate content and even potential interactions with strangers.

Fortunately, Netflix parental controls give you a way to monitor your child’s viewing and reduce those risks. Here’s what you need to know about how to make Netflix safe for your child.

Even though Netflix is packed with great content, it also comes with potential risks:

Wondering how to set up Netflix parental controls? Here are some steps you can take:

It might seem easier to let your child use your Netflix profile, but adult profiles don’t have the same protections for kids. A Kids profile is ideal for kids ages 12 and under. Netflix Kids filters content, allows you to use parental controls, and disables access to Netflix Games.

Once their Kids profile is created, you can:

Because Netflix Kids profiles cap content at PG, most teens will be ready to transition to a regular profile. Even without the built-in protections of a Kids profile, there are still steps you can take to help them view safely:

Follow these steps to set parental controls on Netflix:

For additional information on how to use Netflix parental controls, visit this guide.

Netflix is a great resource for child-friendly shows if you take appropriate steps to make your child’s viewing experience safe, such as setting up a Kids profile, using parental controls, and monitoring your child’s viewing.

BrightCanary helps parents monitor what their kids search on popular platforms, including streaming services like Netflix, as well as social media, text messages, and internet browsers. Download BrightCanary today to get started for free.

Does your kid talk nonstop about farming, mining, and foraging? Do they excuse themselves from dinner to tend to their chickens? If so, you might have a Stardew Valley player on your hands. But is Stardew Valley appropriate for kids?

Let’s get into why it’s a good pick for your child and what parents need to know about the game.

Stardew Valley is rated for kids ages 10+, according to the ESRB and Common Sense Media.

Older kids (and adults) can also enjoy Stardew Valley, which is often called a comfort game due to its slower-paced gameplay.

The language built into Stardew Valley is mild but does include put-downs like “idiot” and swear-adjacent words like “damn.”

In multiplayer games, other players can make custom signs and send messages to each other, which can expose your child to mature language.

So, the language in Stardew Valley is only as mature as your child and their friends decide to make it.

There are no explicit sex scenes in Stardew Valley, but there is some mature content parents should know about:

These will likely go over the heads of younger children, but older or more precocious kids could easily turn the game into a dating simulator.

There’s a case to be made for a safe outlet for roleplaying adult romantic relationships, but parents should be aware of this possibility.

As for nudity, the most your child will see is the occasional topless man or a woman in a bikini.

The violence in Stardew Valley is cartoonish and not graphic. Aside from the rare argument that leads to one character punching another, players don’t fight against each other or human non-player characters (NPCs). Instead, they battle non-human creatures.

The only weapons are swords and tools, not guns. When monsters die, they just disappear.

Every kid has a different threshold for what frightens them, but Stardew Valley isn’t scary by most standards. Because the graphics are rendered in a pixelated 2D style, the monsters are unlikely to be scary even for younger children.

There’s substance use (and abuse) in Stardew Valley.

A player can have their avatar drink, and some of the NPCs drink to excess, including one character whose entire story revolves around his alcoholism. To be fair, the negative impact drinking has on his life is made clear. There are also NPCs who smoke tobacco.

In addition to depictions of alcoholism, the game also touches on suicide.

The alcoholic NPC is open about his depression and sometimes lies on the edge of a cliff, begging players to give him a reason not to roll to his death below. It’s pretty heavy stuff, but the upside is the character also asks around about getting help for his depression.

Parents might want to use it as an opening for discussing mental health with their child.

No, Stardew Valley doesn’t have in-game parental controls. However, you can use the parental control settings on the platform your child plays, most of which allow you to set time limits and content filters.

Stardew Valley is available on desktop, Xbox, PlayStation, Nintendo Switch, iOS, and Android. Here’s our guide to Nintendo Switch parental controls.

It’s common for kids to search for videos and fan content about the media they consume, including YouTube playthroughs and fan forums on Reddit. You can also use a monitoring app like BrightCanary to keep track of your child’s activity on their iPhone or iPad, including what they search on Google and YouTube.

The representation in Stardew Valley is a mixed bag.

The game only allows for two genders when selecting an avatar, and every avatar shares the same slender body type. Gender presentation and roles aren’t fixed, though: female avatars can grow beards, and same-sex marriages are allowed.

The majority of the villagers are white and able-bodied. One character uses a wheelchair, but the portrayal of his attitude toward his disability, and of the ableist treatment he receives from other NPCs, is controversial. Players are given the option of calling out the ableism, but oddly, the game mechanics steer them away from this option.

It’s possible to play Stardew Valley remotely with others, but only in co-op mode. An invite code is needed to join someone’s game — there aren’t public games like in Roblox.

The only way your child could encounter strangers in the game is if they’re invited by a friend who also invites other people your child doesn’t know.

Stardew Valley is a safe, fairly tame game that’s appropriate for most kids over 10. It includes some mature themes such as alcoholism and suicide, and there is a fair amount of sexual innuendo, depending on what your child chooses to focus on in the game.

Parents will want to keep an eye on how their child plays Stardew Valley and consider using the mature themes as conversation starters. Check out our guide to other kid-friendly video games.

Kik may be the most popular teen messaging app that most parents have never heard of. But predators, inappropriate content, and a lack of parental controls raise major concerns about the platform. So, is Kik safe?

This article dives into the hidden dangers of Kik and steps parents can take to keep their child safe on the app.

At its core, Kik is a messaging app, but it includes additional features such as live video chats with strangers, public groups, and a dating section. These additional offerings make Kik feel like a hybrid between a messaging app, a social media site, and a chatroom.

There’s no question that Kik is popular with teens. In fact, one-third of all American teens use the app. Here are some of the reasons kids are drawn to Kik:

Many of the features that make Kik appealing to younger users also put those same users at risk. The app has drawn criticism from law enforcement and watchdog groups.

Hidden dangers of Kik include:

Any time users can hide behind anonymity, cyberbullying tends to follow, and Kik is no exception. Cases of cyberbullying are common on the app.

You only have to open the app to understand just how sexualized the platform is. When I tested it, nearly every account on the For You page featured users suggestively posed and often wearing very little clothing.

Reports of users being sent sexually explicit messages abound, and the app has been the subject of numerous lawsuits related to explicit material sent to minors and the distribution of child pornography.

The ability to hide their identity, combined with the emphasis on connecting with strangers, makes Kik an appealing app for sexual predators who use it to target and groom victims. The National Center on Sexual Exploitation (NCOSE) calls Kik a “predator’s paradise.”

Although Kik recently raised the minimum age to 18, the app includes no age verification (except in the UK, where Kik must include age verification by law). The lack of age verification means many teens still use the app.

Kik has no parental controls, so a monitoring app like BrightCanary is the main way to keep tabs on your child’s activity on the platform.

The safest option is to not let your child use Kik at all. Swap it for one of these safe messaging apps for kids.

However, parenting is nuanced, and things are rarely absolute. If your child does use Kik, here are some steps you can take to keep them safe:

Because of numerous concerns, such as a lack of age verification, no parental controls, and a history of exploitation on the app, Kik is not safe for kids. However, if your child uses Kik, there are steps you can take to help them use the app more safely. This includes educating them about the risks, showing them how to block problematic users, and using a monitoring app.

BrightCanary helps parents monitor their children’s activity on the apps they use the most, including messaging apps like Kik and social media. Start your free trial today.

Many parents (myself included) hold rigid, outdated ideas when it comes to screen time limits for our kids. I write about kids and technology professionally, and I still find myself giving my kids strict, time-based limits for their screen time, even though I know that quality matters more than quantity.

That’s why I was intrigued when I came across the idea of intentional screen time and wanted to explore the concept.

But what is intentional screen time? And how can parents guide their kids toward healthier tech habits that will serve them for years to come?

Here’s what I found, including how to help your child practice intentional screen time and strategies for shifting your screen time policies from restriction to a guided approach.

Intentional screen time means being mindful of our device use and making deliberate decisions about it. It includes noticing what we’re doing on our screens and why, including what we’re giving up by being on our devices, and then shaping our habits to reflect our goals and values.

Employing these concepts will help you shift your approach to your child’s screen time.

Here are questions to ask yourself and your child (and to teach them to ask themselves) in order to evaluate the quality of their screen time:

The older a child, the more direct conversations you can have with them about intentional screen time and the more involved they can be in assessing their own device use. For younger kids, parents will need to be more involved. Kids of all ages benefit from seeing their caregivers engage thoughtfully with technology.

Here are some ways you can model intentional screen time:

Here are some tips for shifting from a parenting model of restriction to one of guidance when it comes to screen time.

From no devices at the dinner table to shutting down for family game night, think about what’s important in your household and set guidelines accordingly.

For example, you might all take part in screen-free activities before bedtime, like listening to music or prepping lunches for the next day.

Keep an eye on what your child does on their device, through conversation and monitoring. Knowing what they’re up to will help inform how you guide them toward more intentional screen time.

Don’t just introduce the idea of intentional screen time once and then be done. It’s an ongoing process. Talk about what you notice and encourage your kids to share with you how it’s going for them.

Helping your child make intentional decisions about their device use fosters a healthier relationship with technology that will serve them for years to come. You can teach your child how to evaluate their own screen time and how to make decisions that support their wellbeing. Stay engaged with how they use their devices, and model intentional screen time in your own behavior.

BrightCanary can help you supervise how your child spends their screen time. The app uses advanced AI technology to scan your child’s activity and sends you an alert when they encounter a red flag. Download today and get started for free.

Chances are, your child is already using artificial intelligence (AI). While AI has potential benefits, like brainstorming ideas and helping generate study questions, the technology also presents risks such as false information, opportunities for cheating, and cyberbullying. That’s why it’s essential to help your child learn to use AI in a way that’s positive, productive, and ethical.

Here are some of the ways kids are already using AI, the pros and cons, and how to help them use it responsibly.

Many adults associate kids using AI with cheating on schoolwork. While that’s definitely a reality, it’s not as common as many assume, and it’s far from the only way kids use the technology.

Here are some other ways kids use AI:

Here are a few benefits to letting your child use AI:

Despite the benefits, there are significant concerns about AI that parents need to know:

You play an important role in helping your child learn to use AI responsibly. Here are some tips:

Ask your child how they use AI and the benefits and problems they find with it. In many cases, kids are already more critical and savvy users of AI than adults.

Rather than just taking AI at face value, kids need to learn to think critically about how they use it, the validity of the information it provides, and the biases it includes.

Outsourcing everything to AI can weaken the learning benefits your child gains from completing an assignment. Encourage them to try to solve a problem or brainstorm an idea on their own first before turning to AI for support.

Be clear that these AI habits are not okay:

Kids have the opportunity to carefully consider the ways they want (or don’t want) AI to be a part of their lives. Encourage them to actively question the role of AI in their life and work to find a balance that feels right for them.

Engaging with AI has potential benefits for kids, but it also comes with many risks. Parents need to talk with their child about AI and help guide them toward responsible use.

Curious about what your child is searching on AI platforms? BrightCanary is coming out with a new update that will monitor your child’s most-used apps, including what they prompt AI platforms. Download BrightCanary on the App Store and be the first to know about it.

Roblox is a popular online gaming platform where users can create open-world games for others to play. It also offers the ability to interact with other players through open chat. But are Roblox chats safe? And what parental controls can help protect your child?

Read on to learn how to set up Roblox chat parental controls and what other steps you can take to keep your child safe.

Roblox includes voice and text chat, allowing users to communicate both in and outside of games, either one-on-one or with groups.

Voice chat is available for users 13 or older with a verified phone number. Players must opt in to use this feature, and it’s not available in all games.

Text chat falls into two categories:

Yes. If your child is under 13, you can use Roblox parental controls to:

To learn about the full slate of Roblox parental controls, check out this guide.

Roblox chats expose children to risks, but with the proper precautions, they can be safe. Here’s what you need to know.

Here are steps you can take to keep your child safe on Roblox chat.

If you’d rather your child not chat on Roblox at all, here’s how to block it in their account:

There are two primary ways you can view your child’s Roblox chat history:

1. Log into their account. Unfortunately, you can’t view your child’s chat history through the Roblox Parental Controls. You have to log directly into their account. Here’s how:

2. Use a monitoring app. BrightCanary uses advanced technology to scan your child’s activity online and alerts you when there’s an issue.

If you’d like to allow your child to chat on Roblox, but want to restrict who they can chat with, you can set their Party chat to Friends. (Experience chat only has the option to allow chats with everyone or no one.)

You can also block specific users from chatting with your child. Here’s how:

Roblox chats are a fun way for kids to connect with friends while playing games together. But they also expose kids to risks like messages from strangers and exposure to inappropriate language and content. To keep your child safe while using Roblox chat, set parental controls, talk to them about how to chat safely, and monitor their use.

BrightCanary helps you stay in the loop. This highly rated monitoring app alerts you to concerning content on the apps your child uses most. Download BrightCanary on the App Store and get started for free today.