Welcome to Parent Pixels, a parenting newsletter filled with practical advice, news, and resources to support you and your kids in the digital age. This week:

🧠 More than half of TikTok’s ADHD content is misinformation: Online platforms are flooded with misleading or unsubstantiated mental health content, according to new research. On TikTok alone, 52% of ADHD-related videos and 41% of autism videos were found to be inaccurate. YouTube averaged 22% misinformation on the same topics.

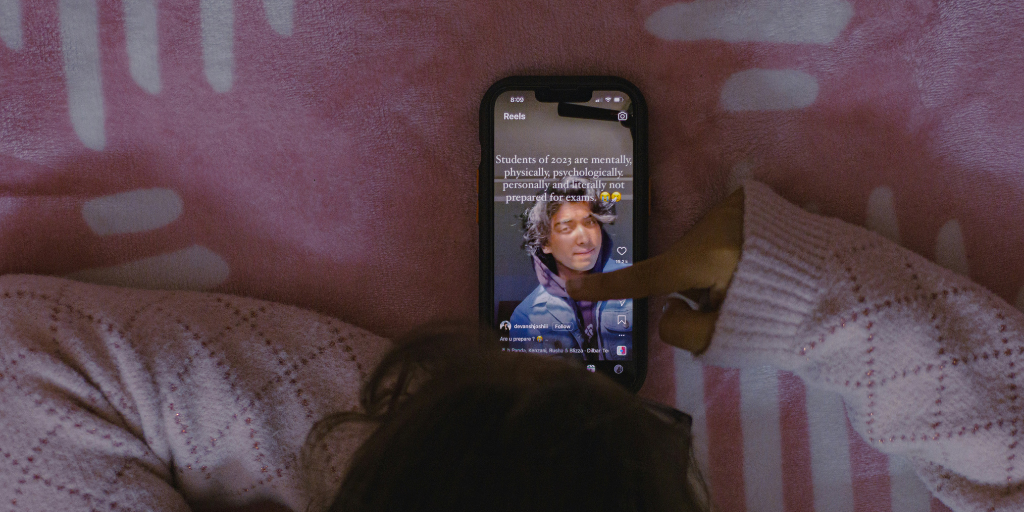

Content created by healthcare professionals was consistently more accurate, but professional voices represent only a small fraction of what's actually circulating on these platforms. (And that one influencer with the flashy editing and jump-cuts is way more engaging.) The content that spreads is the content that generates engagement, and emotionally resonant self-diagnosis videos do exactly that.

When teens absorb inaccurate information about mental health — especially about their own potential diagnoses — it can shape how they understand themselves, how they talk to doctors, and whether they seek the right kind of help. It can also normalize self-labeling in ways that feel affirming in the short term but complicate actual support down the road.

What parents can do: If your child brings home a TikTok-informed self-diagnosis, resist the urge to dismiss it outright. Instead, treat it as an opening: "That's interesting — what made you feel like that applies to you?" If the concern feels real, bring it to a professional rather than letting the algorithm be the final word.

🔞 OpenAI plans to introduce adult content to ChatGPT, but age verification is already failing: OpenAI CEO Sam Altman announced that ChatGPT will begin allowing erotica for verified adults, with a rollout expected later this year. We’re not here to yuck anyone’s yum, but the concern, voiced loudly by billionaire Mark Cuban among others, is that the age-verification system isn’t there yet, and kids will be the ones impacted most.

OpenAI’s age-verification system misclassifies minors as adults 12% of the time, and we’ve found that existing safety features on ChatGPT are a bust. Cuban’s perspective: "This isn't about porn. That's everywhere. Including here [on X]. This is about the connection that can happen and go into who knows what direction with some kid who used their older sibling's log in." (Case in point: Character.ai limited the way teens use its platform following lawsuits, but other explicit AI chatbot platforms like Polybuzz are thriving.)

For parents, the practical takeaway is the same one that applies to every platform that promises age-gating: the gate is not the protection. Your child's understanding of why certain content is harmful, and their ability to come to you when something feels wrong, is. BrightCanary monitors everything your child types across all apps, including ChatGPT — so if something concerning is happening, you'll know about it.

📺 Why harmful content keeps reaching kids, and what advertising has to do with it: There’s an economic reason platforms keep serving harmful content to kids, according to researchers writing in The Conversation: recommendation algorithms are designed to maximize engagement, not to distinguish between helpful and harmful content. And emotionally charged content (the kind that provokes fear, anxiety, outrage, or shock) consistently generates more engagement than neutral material.

Because many social platforms are funded by advertising revenue, and advertising revenue depends on attention, the incentive to serve that content never goes away, regardless of what a platform's safety team is doing on the other side of the building. That’s one of the reasons the same issues keep recurring across different platforms and years, and why parental involvement remains essential regardless of what any platform promises. Curious to learn more? We've written about how social media algorithms work and how to talk to your kids about them.

Parent Pixels is a biweekly newsletter filled with practical advice, news, and resources to support you and your kids in the digital age. Want this newsletter delivered to your inbox a day early? Subscribe here.

Bullying doesn't always look like name-calling. Online, it can be subtler … and harder for kids to name. Use these conversation starters to check in. That last question is the most important one to get an honest answer to.

🔐 Kids aren't learning cybersecurity in school — but parents can fill the gap. Save these five practical ways to teach kids digital security at home, from modeling good habits yourself to teaching them to question what they see.

📋 Pinterest CEO Bill Ready is backing a social media ban for kids under 16. “As both a CEO and a parent, I believe we need to be honest: social media as it exists today is not safe for kids under 16,” Ready wrote on LinkedIn. “We need clearer rules, better tools for parents, and more accountability across the tech ecosystem.”

💔 A 9-year-old in Texas died after attempting a social media challenge she had seen online. JackLynn Blackwell passed away on February 3rd after attempting the blackout challenge, a dangerous trend that has been circulating on social media platforms for years. The CDC has documented at least 80 child deaths connected to this challenge. We don't share this to frighten you; we share it because awareness is a form of protection. Dangerous viral challenges are rarely announced; they spread quietly through feeds and group chats. Knowing what's circulating and having an open line of communication with your child can make a difference.